Will AI steal your job?

The honest answer is: we don't know yet

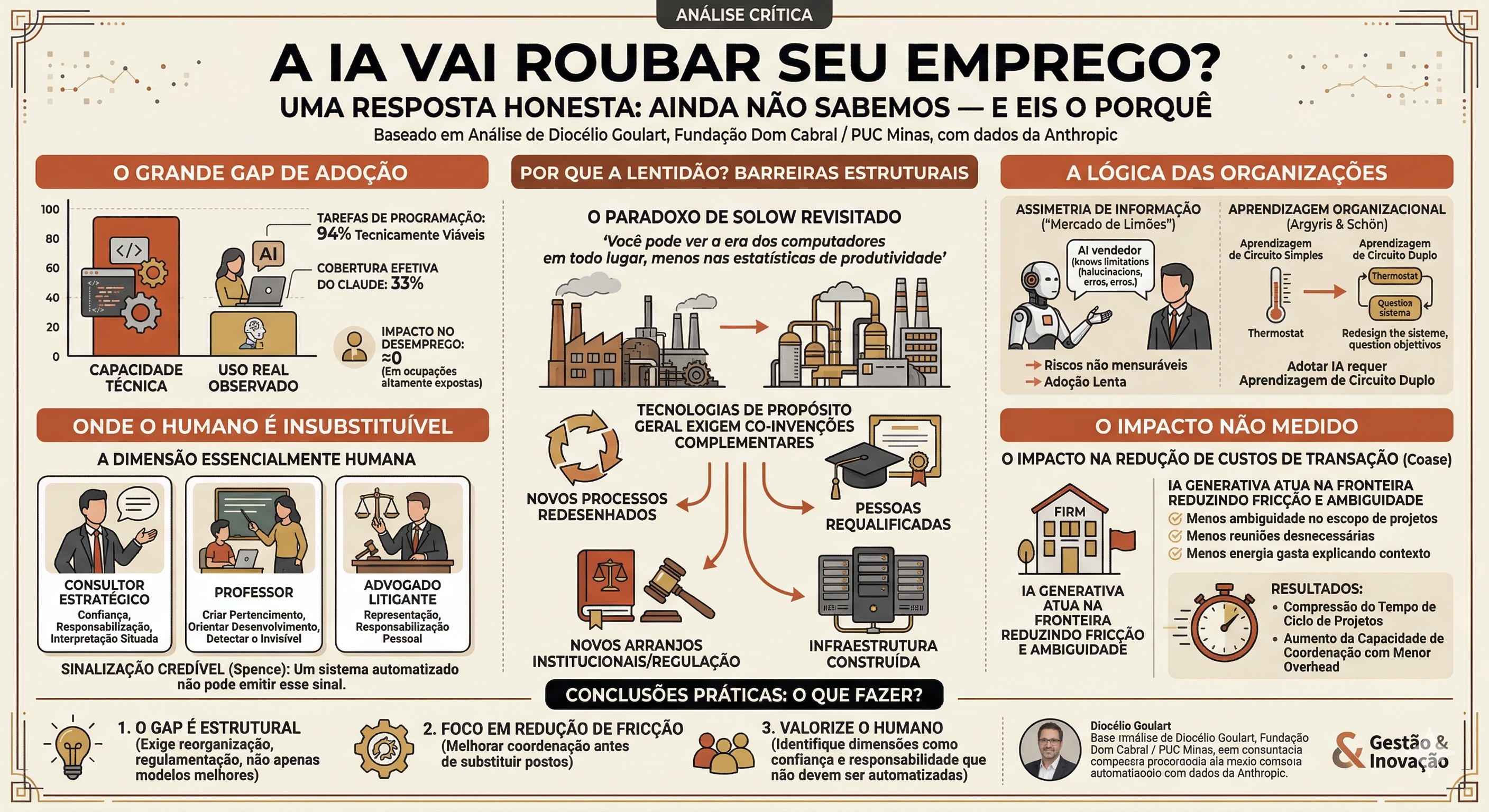

A new report from Anthropic measures, with unprecedented rigor, how much AI is actually used in professional contexts. The gap between what it could do and what it does is enormous — and that tells us something important about technology, organizations, and work.

In March 2026, Anthropic — the company behind the Claude language model — published a report titled Labor Market Impacts of AI: A New Measure and Early Evidence. The text proposes a new metric, called observed exposure, to measure how much generative AI is, in fact, penetrating daily work. The result is surprising: even in the most technically exposed occupations, the actual use of AI is still very far from what would be theoretically possible.

This gap between capability and adoption is the starting point for a deeper reflection. Why does technology advance quickly while transformations in work proceed slowly? The answer lies not in the models, but in how organizations, markets, and people actually function.

01 —The paradox Solow had already seen

In 1987, economist Robert Solow wrote a phrase that became classic: "You can see the computer age everywhere but in the productivity statistics." Computers were transforming offices, banks, industries — but macroeconomic productivity numbers weren't moving.

Anthropic's report repeats this paradox on a smaller scale, but with disconcerting clarity. The company has access to real usage data for its own model in professional contexts, and what it found is a 61-percentage-point gap between what AI can do and what it actually does in Computer and Mathematical occupations. For those who follow the narrative that "AI will transform everything in a few years," this figure deserves a pause.
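To make the arithmetic behind such a gap concrete, here is a minimal sketch. It assumes a simplified setup in which each task in an occupation carries two yes/no flags: technically feasible for an LLM, and actually observed in usage data. The task list, the flags, and the `coverage_gap` helper are invented for illustration; they are not the report's data or its exact method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    feasible: bool  # assumption: judged technically doable by an LLM
    observed: bool  # assumption: actually shows up in usage data


def coverage_gap(tasks):
    """Return (theoretical %, observed %, gap in percentage points)."""
    if not tasks:
        raise ValueError("need at least one task")
    n = len(tasks)
    theoretical = 100 * sum(t.feasible for t in tasks) / n
    # A task counts as observed only if it is also feasible.
    observed = 100 * sum(t.feasible and t.observed for t in tasks) / n
    return theoretical, observed, theoretical - observed


# Hypothetical tasks, not taken from the report.
tasks = [
    Task("draft unit tests", True, True),
    Task("summarize a bug report", True, False),
    Task("refactor a legacy module", True, False),
    Task("negotiate with a vendor in person", False, False),
]

theoretical, observed, gap = coverage_gap(tasks)
print(f"theoretical {theoretical:.0f}%, observed {observed:.0f}%, gap {gap:.0f} points")
# prints: theoretical 75%, observed 25%, gap 50 points
```

The point of the sketch is only that the gap is a difference between two shares of the same task list, so it is expressed in percentage points rather than percent.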

General-purpose technologies only produce systemic effects after complementary co-inventions develop: new processes, new skills, new institutional arrangements.

— Bresnahan & Trajtenberg (1995), revisited in light of 2026

Electricity arrived in American factories at the end of the 19th century. It wasn't until the 1920s that factories were redesigned to truly leverage it, with individual electric motors on each machine replacing the steam-driven belt systems. Generative AI is a general-purpose technology. And general-purpose technologies require complementary co-inventions before producing systemic returns: redesigned processes, reskilled people, adapted regulations, built infrastructure.

The report acknowledges this in passing, listing "gradual adoption, legal restrictions, and human verification steps" as barriers. But it treats these factors as temporary frictions that technical progress will eliminate. This assumption needs to be challenged.

02 —The problem of who knows what AI actually does

When you consider buying a used car, you're at a disadvantage: the seller knows the vehicle's history, you don't. Economist George Akerlof called this the market for lemons — and showed that information asymmetry can paralyze entire markets.

With AI in organizations, the logic is similar. Companies that develop language models know their limitations in depth: hallucinations, errors in multi-step reasoning, erratic behavior outside training patterns. The manager who decides to adopt AI in their HR, legal, or finance department has access to a fraction of this knowledge.

The problem of adverse selection in AI adoption: early adopters take risks they cannot fully measure. Those who wait for external evidence delay real gains. The aggregate result is slower adoption than technical potential alone would suggest.

There is also the problem of what happens after adoption. Once AI is integrated into the workflow, the risk of insufficient supervision arises. The report itself records a revealing fact: the task of authorizing prescription refills and providing prescription information to pharmacies is classified as technically viable for LLMs, yet it does not appear in Claude's actual usage data. This is not due to a lack of technical capability. It is because doctors, pharmacists, and health regulations do not relinquish human accountability in this process. And rightly so.

This data is not a flaw in the metric. It is a lesson about what drives technological adoption in real life.

03 —Organizations don't adopt technologies, they reconstruct them

Chris Argyris and Donald Schön distinguished two types of organizational learning. In single-loop learning, the organization corrects errors within existing parameters, like a thermostat regulating temperature. In double-loop learning, it questions the parameters themselves: why are we doing it this way? What objectives are we truly pursuing?

Effectively integrating generative AI into organizational workflows almost always requires double-loop learning. It's not enough to give an analyst an AI assistant and ask them to do the same job faster. It's necessary to rethink which tasks still make sense, how processes need to be redesigned, which responsibilities should be redistributed — and who is accountable when something goes wrong.

Cohen and Levinthal introduced the concept of absorptive capacity: an organization's ability to recognize, assimilate, and apply new knowledge. This capacity strongly depends on the knowledge the organization already possesses. Companies with a strong technical foundation in computing absorb AI with lower costs. Companies without this foundation need to build it before reaping benefits — and that takes time, investment, and tolerance for error.

This partly explains why the report finds the most exposed workers concentrated in highly skilled technical occupations: programmers, financial analysts, advanced-level customer service representatives. These professionals and the organizations they work for have the absorptive capacity to deal with the technology. The others are not immune to change — they are at a different stage of the learning curve.

04 —What is essentially human is not just difficult to automate — it is valuable because it is human

There is an argument the report does not make, but which is the most important one for understanding the limits of the "task coverage" metaphor. In many occupations, the human dimension is not the means by which work is done; it is the end product itself.

- A strategic consultant does not sell analysis. They sell trust, accountability, and interpretation situated in a specific organizational context they know intimately.

- A teacher does not transfer content. They create belonging, guide development, detect what is not on the surface of learning.

- A litigation lawyer does not process legal information. They represent their client before institutions that demand personal accountability — and are responsible for it.

Removing the human from these equations is not just technically challenging. It is economically counterproductive in contexts where the professional's humanity is a constitutive part of the value delivered. Economist Michael Spence showed that in markets with information asymmetry about quality, human presence functions as a credible signal of commitment. An automated system is structurally incapable of emitting this signal — not because it is less technically capable, but because it cannot be held accountable.

05 —The impact no one is measuring: less friction, more clarity

The entire debate about AI and work revolves around one axis: whether or not jobs are displaced. But there is a potentially more immediate, and empirically more tractable, channel of impact that was left out of the report and much of the literature.

In 1937, Ronald Coase asked why firms exist. The answer he gave was: because coordinating through the market has transaction costs — costs of communicating, negotiating, verifying, monitoring. The firm internalizes these transactions when it is cheaper to do so internally.

Generative AI seems to operate precisely on this frontier. What I have observed in teams that adopt it more maturely is less about producing faster and more about reducing friction and gaining clarity in how people coordinate.

If this is empirically confirmed, the impact of AI would not primarily manifest as job displacement, but as compression of project cycle time and increased coordination capacity with less overhead. Smaller teams might be able to deliver what previously required larger teams — not because people were laid off, but because friction between them decreased.

Measuring this phenomenon would require metrics not used in the report: production density per person, project cycle speed, quality of intra-organizational communication. It is an urgent and underexplored research agenda.
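As a rough illustration of how two of these metrics could be computed, here is a minimal sketch. It assumes a team keeps simple project records with a team size, start and end dates, and a count of deliverables. The `Project` record, the `summarize` helper, the definition of output per person, and all the numbers are hypothetical; communication quality is left out because it has no obvious simple proxy.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean


@dataclass(frozen=True)
class Project:
    team_size: int
    started: date
    finished: date
    deliverables: int  # assumption: some agreed unit of output


def cycle_days(project):
    """Project cycle time in calendar days."""
    return (project.finished - project.started).days


def summarize(projects):
    """Mean cycle time and deliverables per person across projects."""
    return {
        "mean_cycle_days": mean(cycle_days(p) for p in projects),
        "deliverables_per_person": (
            sum(p.deliverables for p in projects)
            / sum(p.team_size for p in projects)
        ),
    }


# Invented records for two periods, purely for illustration.
before = [
    Project(8, date(2025, 1, 6), date(2025, 3, 7), 4),
    Project(6, date(2025, 2, 3), date(2025, 3, 31), 3),
]
after = [
    Project(5, date(2025, 9, 1), date(2025, 10, 13), 4),
    Project(4, date(2025, 10, 6), date(2025, 11, 10), 3),
]

for label, projects in (("before", before), ("after", after)):
    print(label, summarize(projects))
```

A comparison like this says nothing about causes on its own; it only shows that shorter cycles and higher output per person are measurable with records most teams already keep.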

What to do with all this?

Anthropic's report is methodologically honest. It rigorously finds an absence of systematic impact on unemployment in the most exposed occupations since the launch of ChatGPT. This does not mean that AI will not transform work — it means that this transformation obeys logics that go far beyond the technical capabilities of the models.

Three practical conclusions emerge from this reading:

- The gap between theoretical potential and actual use of AI is, to a large extent, structural — and will not be solved merely with better models. It requires process reorganization, building absorptive capacity, and regulatory adjustment.

- Organizations that adopt AI to reduce friction and improve coordination will likely reap gains before those that adopt it to replace jobs. The most immediate impact may be on communication quality, not team size.

- There are dimensions of human work that should not be automated — not due to technical limitation, but because the professional's humanity is a constitutive part of the value delivered. Identifying these dimensions is as important as measuring technical exposure.

The question is not whether AI will transform work. It is when, how, for whom, and at what cost. Answering this honestly requires precisely the kind of humility that the report's authors demonstrate with the data, but which is still lacking in much of the theorizing about AI and work.